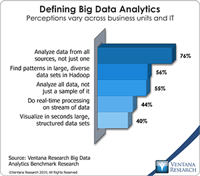

Teradata continues to expand its information management and analytics technology for big data to meet growing demand. My analysis last year discussed Teradata’s approach to big data in the context of its distributed computing and data architecture. I recently got an update on the company’s strategy and products at the annual Teradata analyst summit. Our big data analytics research finds that a broad approach to big data is wise: Three-quarters of organizations want analytics to access data from...

Topics: Big Data, Teradata, Operational Performance Management (OPM), Analytics, Business Analytics, Business Intelligence, Cloud Computing, Information Management, NoSQL, Business Performance Management (BPM), Customer Performance Management (CPM), Financial Performance Management (FPM), Information Applications (IA), Information Management (IM), IT Performance Management (ITPM), JSON, Sales Performance Management (SPM), Supply Chain Performance Management (SCPM), UDA, Workforce Performance Management (WPM)

I had the pleasure of attending Cloudera’s recent analyst summit. Presenters reviewed the work the company has done since its founding six years ago and outlined its plans to use Hadoop to further empower big data technology to support what I call information optimization. Cloudera’s executive team includes the co-founders of Hadoop, who worked at Facebook, Oracle and Yahoo when they developed and used Hadoop. Last year they brought in CEO Tom Reilly, who led successful organizations at ArcSight, HP...

Topics: Big Data, Teradata, Zoomdata, Business Intelligence, Cloudera, Hortonworks, IBM, Location Intelligence, Operational Intelligence, Oracle, Hive, Impala, Information Applications (IA), Information Management (IM), IT Performance Management (ITPM), Strata+Hadoop

At this year’s annual SAP user conference, SAPPHIRE, the technology giant showed advances in its cloud and in-memory computing efforts. It has completed the migration of its conventional application suite and portfolio of tools to operate on SAP HANA, its in-memory computing platform, and made improvements in its cloud computing environment, SAP HANA Enterprise Cloud. The last time I analyzed SAP HANA was when it won our firm’s 2012 Overall IT Technology Innovation Award. Now HANA has been...

Topics: Big Data, Predictive Analytics, SAP, Social Media, Teradata, Mobile Technology, Operational Performance Management (OPM), Business Analytics, Business Collaboration, Business Intelligence, CIO, Cloud Computing, Governance, Risk & Compliance (GRC), HP, Business Performance Management (BPM), CFO, CMO, Customer Performance Management (CPM), Financial Performance Management (FPM), Information Applications (IA), Information Management (IM), SAP EPM, SAP HANA, SAP Lumira, SAPPHIRE, Supply Chain Performance Management (SCPM), Tagetik, Workforce Performance Management (WPM)

Teradata recently gave me a technology update and a peek into the future of its portfolio for big data, information management and business analytics at its annual technology influencer summit. The company continues to innovate and build upon its Teradata 14 releases and its new processing technology. Since my last analysis of Teradata’s big data strategy, it has embraced technologies like Hadoop with its Teradata Aster Appliance, which won our 2012 Technology Innovation Award in Big Data....

Topics: Big Data, MicroStrategy, SAS, Tableau, Teradata, Customer Excellence, Operational Performance Management (OPM), Analytics, Business Analytics, Business Intelligence, CIO, Cloud Computing, In-Memory Computing, Location Intelligence, Operational Intelligence, CMO, Customer Performance Management (CPM), Discovery, Information Applications (IA), Information Management (IM), Intelligent Memory, Teradata Aster, Strata+Hadoop

Businesses are starting to realize that taking advantage of big data is not just technically feasible but affordable for organizations of all sizes. However, as outlined in our agenda on big data and information optimization, the technology must be engineered to the information needs of business. Hortonworks has been steadily advancing its Hadoop-based big data technology and contributing its developments back to the Apache Software Foundation for a range of projects. The company performs...

Topics: Big Data, Microsoft, Talend, Teradata, Simba, Strata Conference, Business Analytics, Cloud Computing, Hortonworks, Informatica, HDP, Hive, Information Applications (IA), Information Management (IM), Tez, Strata+Hadoop

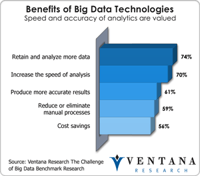

Using Hadoop just got easier, thanks to Teradata’s introduction of SQL-H, a new query interface to analyze data from Hadoop. Most Hadoop access methods require preprocessing and staging of data from the Hadoop Distributed File System (HDFS) using technologies such as MapReduce. These approaches require new skills and technologies, adding time and cost for users and offsetting the benefits of Hadoop, which according to our big data benchmark research include increasing the speed of...

Topics: Big Data, Teradata, SQL-H, Business Analytics, Business Intelligence, CIO, Hortonworks, Information Management, Data, Information Management (IM), Strata+Hadoop